Physical AI

When algorithms become capable of action in production

The short answer

Physical AI (often also referred to as "embodied AI") marks the point at which artificial intelligence leaves the purely digital world. It combines perception, planning and physical action in real environments.

In contrast to classically programmed systems, these machines act in a closed loop: They record their environment via sensors, make autonomous decisions and carry out actions in real time.

Many companies have already recognized the potential of generative AI in their administrative processes. In manufacturing and logistics, however, the actual operational value is created away from the screens. It is precisely here, on the shop floor, that automation is reaching a critical turning point in 2026: Physical AI.

Classic industrial robots work with high precision, but blindly. As soon as reality deviates from the programmed standard, for example because a component has slipped or the light falls differently, faults occur.

Physical AI solves precisely this problem by enabling machines to understand their environment and react according to the situation. For decision-makers in operations and production, it is now a matter of turning impressive technology demos into resilient and repeatable processes for their own company.

5 key takeaways

- The next level of maturity: Physical AI is much more than a camera linked to a chatbot. It is the deep interaction of models, robotics and industrial execution.

- Sim-to-Real as a foundation: The behavior of the systems is trained in simulations and digital twins before it is safely transferred to the real factory floor.

- Pragmatism before hype: While corporations are testing humanoid robots, the most realistic entry point for SMEs is often AI-supported image processing (vision) paired with local edge automation.

- A clear business case: Today, Physical AI pays off above all in repetitive, physically demanding tasks and in high-precision quality inspection.

- Step-by-step integration: The path to practical application does not begin with fully autonomous factories, but with narrowly defined use cases, clear termination criteria and humans as the final control authority.

What is Physical AI really?

Short answer:

Physical AI combines AI models with physical hardware (robotics, sensors) in order to act autonomously in the real world in real time. The system goes through a constant cycle of perception, decision-making and action, with training taking place in advance in secure digital simulations (sim-to-real).

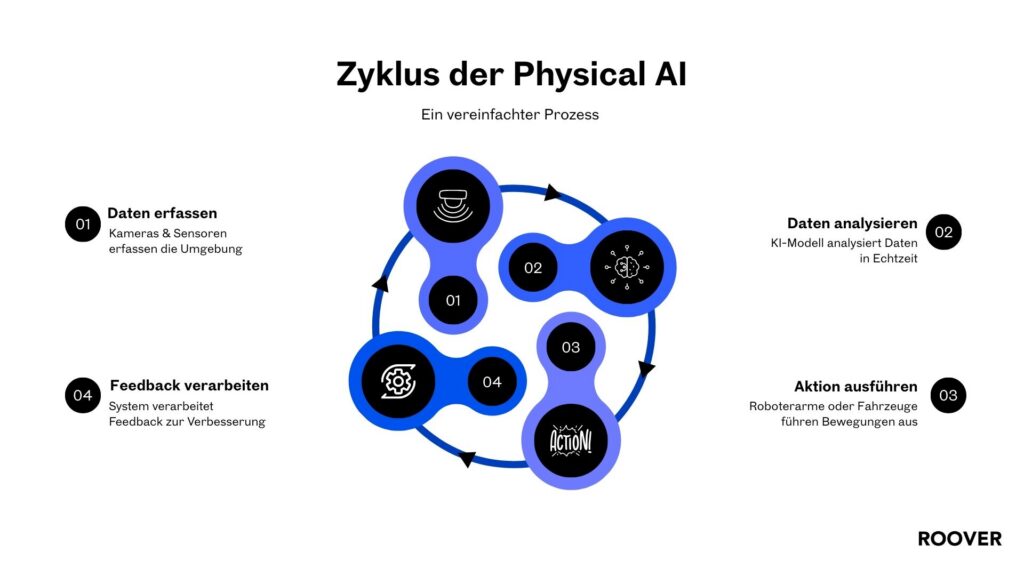

Physical AI describes systems that not only analyze data or output content, but also intervene directly in the physical world. This happens in a continuous cycle:

- Firstly, cameras and sensors capture the real environment.

- Secondly, an AI model analyzes the data in real time and plans the next step.

- Thirdly, robotic arms or vehicles carry out the movement.

- Fourthly, the system processes the direct feedback from this action in order to learn from it.
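The cycle above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production controller: `Conveyor`, `plan` and `run_loop` are hypothetical names, the "world" is a toy model of a part that has slipped, and the decision step is a simple proportional rule rather than a trained model. Feedback is shown only as re-measuring after each action; learning from that feedback is not modeled.

```python
from dataclasses import dataclass


@dataclass
class Conveyor:
    """Toy stand-in for the real world: a part that has slipped off position."""
    part_position_mm: float

    def sense(self) -> float:
        # Perception: a camera or sensor would report the measured position.
        return self.part_position_mm

    def actuate(self, move_mm: float) -> None:
        # Action: a gripper or axis nudges the part by the commanded distance.
        self.part_position_mm += move_mm


def plan(measured_mm: float, target_mm: float, gain: float) -> float:
    # Decision: move a fraction of the remaining error (proportional rule).
    return gain * (target_mm - measured_mm)


def run_loop(world: Conveyor, target_mm: float, tolerance_mm: float = 0.1,
             gain: float = 0.5, max_cycles: int = 50) -> int:
    """Repeat sense -> plan -> act until the part is within tolerance.

    Returns the number of corrective actions taken and raises RuntimeError
    if the loop does not converge within max_cycles.
    """
    cycles = 0
    measured = world.sense()
    while abs(target_mm - measured) > tolerance_mm:
        if cycles >= max_cycles:
            raise RuntimeError("no convergence; hand over to a human operator")
        world.actuate(plan(measured, target_mm, gain))
        cycles += 1
        # Feedback: observe the result of the action before deciding again.
        measured = world.sense()
    return cycles
```

With an initial offset of 8 mm and the default gain of 0.5, the remaining error halves on every cycle and falls below the 0.1 mm tolerance after seven corrective actions.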

In this context, NVIDIA coined the term “Generative Physical AI”. The technological definition gets to the heart of the development:

“Physical AI models must not only understand language or images, but also grasp the physical laws of the real world – such as gravity or friction – in order to perform complex tasks autonomously.”

The decisive factor in practice is that such systems are not taught directly on the assembly line. The behavior is trained and validated in photorealistic, physically correct simulations (digital twins) before it is safely transferred to the real factory floor. This “sim-to-real” approach is the actual foundation for safely scaling AI hardware.
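One way to picture the sim-to-real idea is as a release gate: a behavior is only transferred to the real line once it has passed many randomized simulated scenarios. The sketch below assumes a deliberately simple toy physics model in which friction swallows part of every commanded move. The function names, the friction range and the tolerance are illustrative assumptions, not values from any real simulation platform.

```python
import random


def simulate_episode(gain: float, friction: float, start_error_mm: float,
                     steps: int = 30) -> float:
    """Run one simulated positioning episode; return the final absolute error.

    Toy physics (an assumption): friction in [0, 1) removes that share of
    every commanded move.
    """
    error = start_error_mm
    for _ in range(steps):
        commanded = gain * error
        error -= commanded * (1.0 - friction)
    return abs(error)


def validate_in_simulation(gain: float, trials: int = 200,
                           tolerance_mm: float = 0.1, seed: int = 0) -> bool:
    """Release gate: the behavior must pass every randomized scenario."""
    rng = random.Random(seed)  # fixed seed keeps the test run reproducible
    for _ in range(trials):
        friction = rng.uniform(0.0, 0.6)        # randomized surface condition
        start_error = rng.uniform(-10.0, 10.0)  # randomized part offset in mm
        if simulate_episode(gain, friction, start_error) > tolerance_mm:
            return False
    return True
```

In this toy model a gain of 0.5 passes all scenarios, while a very cautious gain of 0.05 fails because it cannot close the error within the available steps. A real validation would replace the toy physics with a digital twin and add many more randomized parameters, such as lighting, part geometry and sensor noise.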

Why Physical AI will achieve operational relevance in 2026

Short answer:

The technology is ready for the shop floor in 2026 because hardware is becoming more affordable, industrial robot installations remain at a very high level worldwide, and the major technology players are now supplying industrial-grade software stacks for safe training in digital twins.

The fact that Physical AI is leaving prototype status and arriving on the shop floor is due to three sober, mutually reinforcing drivers:

Firstly, robotics continues to scale. Around 542,000 new industrial robots were installed worldwide in 2024, one of the highest annual totals on record. Asia accounts for roughly 74% of these installations, with China as the strongest single market. The physical hardware base in factories is therefore growing massively, becoming more robust and increasingly affordable.

Secondly, the bottleneck is shifting. With Physical AI, the real problem today is rarely finding a suitable foundation model. The real bottleneck lies in data, training and validation. To train and test behavior safely in an industrial setting, companies need simulations, synthetic data and reproducible test environments. This is precisely why platforms and stacks for simulation and robotics training are currently pushing so strongly onto the market.

Thirdly, the stack is becoming more industrialized. Major technology players are making the infrastructure suitable for practical use. NVIDIA is explicitly positioning “Physical AI” as the next technological wave after text-based generative AI and is linking the topic directly to industrial robotics tooling.

Practical example 1: Humanoid assistance for physical routines at Mercedes-Benz

In the automotive sector, the use of humanoid robots for physically demanding tasks is being tested intensively. One striking example is provided by the Mercedes-Benz Group, which is piloting humanoid robots from the manufacturer Apptronik (Apollo model) in its factories.

The focus here is on material handling, such as lifting and moving containers (totes), as well as direct logistical support in production.

What does this mean in concrete terms for business benefits?

For those responsible for operations, it is not the visual “wow moment” that is decisive here, but the operational core: the selected tasks are repetitive, physically demanding, clearly definable and measurable. Physical AI is aimed at concrete metrics here – stable cycle times, lower error rates and, above all, ergonomic relief for human specialists who can be deployed for more demanding tasks.

Practical example 2: Tactile robotics for complex handling by Agile Robots

The Munich-based company Agile Robots shows that physical AI is also revolutionizing classic robot arms. Here, digital intelligence merges with physical execution through the use of highly sensitive force-torque sensors in the robot joints.

AI enables the robot not only to “see” via cameras, but also to “feel” physical resistance with high precision. This makes it possible to automate assembly processes that previously had to rely on human intuition.

Why is this relevant?

In practice, this means that machines can now take on tasks such as inserting flexible cables or joining components with minimal tolerances. If a component tilts, Physical AI senses the resistance, aborts the rigid movement and corrects the angle autonomously – without any manual intervention by a programmer. This creates completely new degrees of freedom for collision-sensitive robotics.
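The abort-and-correct behavior described here can be sketched as a guarded insertion loop. This is a simplified illustration, not Agile Robots' actual control software: `SimulatedJoint` is a hypothetical toy model in which the measured torque grows linearly with the tilt between part and hole, and the limit and step size are assumed values.

```python
from dataclasses import dataclass


@dataclass
class SimulatedJoint:
    """Toy joint: signed torque grows linearly with tilt (an assumption)."""
    tilt_deg: float
    torque_nm_per_deg: float = 0.4

    def measured_torque_nm(self) -> float:
        # A force-torque sensor in the joint would deliver this reading.
        return self.tilt_deg * self.torque_nm_per_deg

    def rotate(self, delta_deg: float) -> None:
        self.tilt_deg += delta_deg


def guarded_insert(joint: SimulatedJoint, torque_limit_nm: float = 0.5,
                   step_deg: float = 0.5, max_corrections: int = 20) -> int:
    """Insert only while the sensed torque stays within the limit.

    On excess torque the motion is aborted and the angle is corrected one
    step against the sensed torque. Returns the number of corrections made.
    """
    corrections = 0
    torque = joint.measured_torque_nm()
    while abs(torque) > torque_limit_nm:
        if corrections >= max_corrections:
            raise RuntimeError("torque limit still exceeded; stop and call an operator")
        # Correct against the direction of the sensed torque.
        joint.rotate(-step_deg if torque > 0 else step_deg)
        corrections += 1
        torque = joint.measured_torque_nm()
    return corrections
```

Starting from a tilt of 3 degrees, this sketch needs four corrections of 0.5 degrees each before the torque drops below the assumed limit of 0.5 Nm and the insertion can proceed. The key design choice is that the correction is driven by what the sensor feels, not by a pre-programmed path.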

Practical example 3: AI vision and edge automation at Audi

Physical AI does not necessarily need a humanoid body. The combination of intelligent image processing (AI vision) and direct machine control at the edge (i.e. locally at the machine, without the latency of a round trip to the cloud) often offers the fastest and most economical entry into AI process automation in 2026.

Audi is demonstrating this in cooperation with Siemens during optical inspection in car body production. The system analyzes weld seams in real time, detects fine weld spatter and controls its removal directly and automatically in the same work step.

What should companies look out for here?

This is a real blueprint for medium-sized companies. It is a controlled environment with crystal-clear quality criteria. The ROI leverage is extremely high, as errors are rectified at an early stage before the component moves on in the process. Such edge use cases are ideal for building trust in the technology, as the process is monitored closely and in a safety-compliant manner.

The way into practice: How companies get started

Short answer:

Avoid expensive, isolated robotics experiments. The pragmatic start is modular: Start with AI-supported image processing, link it to simple actions and define crystal-clear safety rules for handover to humans.

Trying to anchor the topic in the company by buying a robot without a clear plan almost inevitably leads to expensive, isolated pilot projects that never reach production ("pilot purgatory"). The strategically smarter way to use AI in SMEs is modular.

Start with building blocks that can be scaled later:

- See: Implement camera systems and AI that reliably capture the process (defect detection, parts inspection).

- Act: Link the findings to existing handling systems or actuators that physically intervene based on the data.

- Stay safe: Define hard limits and governance rules. When does the system stop? When does a person have to take over?
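The three building blocks can be sketched as a single decision function in which the safety rules are checked before any physical action is triggered. All names and thresholds here (`Inspection`, `SafetyRules`, a minimum confidence of 0.9, at most three consecutive reworks) are illustrative assumptions. Real limits have to come from the company's own risk assessment.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    PASS = "pass"
    REWORK = "rework"
    STOP_AND_HANDOVER = "stop_and_handover"


@dataclass(frozen=True)
class Inspection:
    """Result of the vision step."""
    defect_found: bool
    confidence: float  # model confidence in [0, 1]


@dataclass(frozen=True)
class SafetyRules:
    """Termination criteria (assumed example values)."""
    min_confidence: float = 0.9
    max_consecutive_reworks: int = 3


def decide(inspection: Inspection, consecutive_reworks: int,
           rules: SafetyRules = SafetyRules()) -> Action:
    """Map an inspection result to an action, checking safety rules first."""
    # Stay safe: an uncertain model never triggers a physical intervention.
    if inspection.confidence < rules.min_confidence:
        return Action.STOP_AND_HANDOVER
    if inspection.defect_found:
        # Stay safe: repeated rework hints at a systematic fault upstream.
        if consecutive_reworks >= rules.max_consecutive_reworks:
            return Action.STOP_AND_HANDOVER
        # Act: trigger the existing handling system or actuator.
        return Action.REWORK
    return Action.PASS
```

Keeping the stop conditions in one small, explicit data structure makes them easy to review, audit and tighten during real operation.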

To get started, choose one or two use cases where the data situation is solid. The focus must be on continuously improving the system: Evaluate results, highlight errors and tighten up the rules in real operation.

Conclusion: The era of the "Physical AI Economy" begins

Physical AI brings the cognitive capabilities of artificial intelligence into the material world. Systems no longer have to be programmed for every eventuality, but instead learn to deal with variances.

Current trend reports, supported by the record figures of the IFR World Robotics Report 2025 and strategic analyses by NVIDIA, classify this development clearly: Physical AI forms the core of the emerging “physical economy”. The industrial AI of 2026 will no longer stay in the browser; it will act directly in the physical process.

But technology alone does not solve operational problems. Success depends on how well AI is integrated into existing processes, security policies and the IT architecture. This requires the establishment of a true AI-first organization in which employees learn to control and orchestrate AI-supported systems as tools.

A well-founded AI strategy for SMEs in 2026 starts right here: It defines the clear business case before investing in hardware. In order to combine technological feasibility with economic benefits, implementation-oriented AI strategy consulting is often the decisive success factor.

If you want to put the topic on the internal agenda and bring decision-makers up to speed on the technical side, a compact AI keynote speech is the right format to dispel myths and sharpen the focus on real, physical implementation.

FAQ - Physical AI

Generative AI (such as ChatGPT) creates texts, images or code on a screen. Physical AI, on the other hand, couples AI models with sensors and robotics to autonomously perform physical actions in the real world – such as gripping components or driving through a warehouse.

Humanoid robots are not a prerequisite. For most medium-sized companies, the combination of intelligent camera sensor technology (AI vision) and classic industrial robots or local edge controllers (as in the Audi example) is the fastest, safest and most economical way to get started.

Safety (governance & compliance) has top priority. The behavior of the systems is tested in advance in digital twins using the “sim-to-real” approach. Strict termination criteria apply in practice: The AI may only act within defined parameters, and humans always remain the final controlling authority.

The basis is a networked data infrastructure. Sensors, systems and IT systems (such as ERP or MES) must be able to communicate with each other. Without clean process data and a modern IT architecture, Physical AI cannot react effectively in real time.
